
The PyTorch version of the loss function is adapted from here.

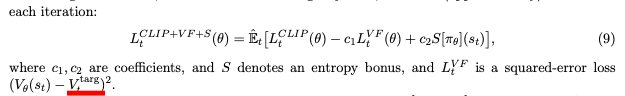

The algorithm we used in both ADL and MLDS is PPO2, which differs from the original PPO.

The differences between them are shown in the figure; more details are covered in Hung-Yi Lee's online lecture videos (part 1, part 2).
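A minimal NumPy sketch of the two objectives, assuming (as in Hung-Yi Lee's lecture slides) that "original PPO" means the KL-penalty variant and PPO2 means the clipped variant. The function names, the fixed `beta` and `eps=0.2` defaults, and passing the KL divergence in as a precomputed scalar are assumptions for illustration. Both functions return objectives to be maximized, so a training loss is their negative.

```python
import numpy as np


def ppo_kl_penalty_objective(ratio, advantage, kl, beta=0.01):
    """Original PPO (KL-penalty variant): importance-weighted surrogate minus beta * KL.

    ratio     -- pi_theta(a|s) / pi_theta_old(a|s) for each sampled step
    advantage -- advantage estimate for each sampled step
    kl        -- estimated KL divergence between old and new policy (scalar, assumed precomputed)
    beta      -- penalty coefficient (adapted between updates in the KL variant)
    """
    return np.mean(ratio * advantage) - beta * kl


def ppo2_clip_objective(ratio, advantage, eps=0.2):
    """PPO2 (clipped variant): no KL term; take the smaller of the raw and clipped surrogates."""
    surr1 = ratio * advantage
    surr2 = np.clip(ratio, 1.0 - eps, 1.0 + eps) * advantage
    return np.mean(np.minimum(surr1, surr2))


# Usage: three sampled steps, all with advantage +1.
ratio = np.array([0.5, 1.0, 1.5])
advantage = np.ones(3)
print(ppo2_clip_objective(ratio, advantage))
print(ppo_kl_penalty_objective(ratio, advantage, kl=0.05))
```

With `eps=0.2`, the ratio of 1.5 on a positive advantage is capped at 1.2, so the objective gains nothing from moving the policy further than the clip range; the KL variant instead relies on `beta` to discourage large policy changes.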

I'd like to ask how the PPO value loss is computed. The PPO paper doesn't seem to define the squared-error loss very clearly (I don't quite understand it).

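I noticed that your ADL and MLDS code compute the PPO squared-error loss differently, and the many implementations I found online (tensorlayer, etc.) also differ from one another. How do you understand this? Thanks.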
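For reference, the PPO paper does write the value term explicitly: it is a squared error inside the combined objective that is maximized, where $r_t(\theta)$ is the probability ratio, $\hat{A}_t$ the advantage estimate, $c_1, c_2$ coefficients, and $S$ an entropy bonus. The equation fixes the form of the loss but not how the target $V_t^{\mathrm{targ}}$ is computed, which is plausibly where implementations differ:

$$
L_t^{\mathrm{CLIP+VF+S}}(\theta) = \hat{\mathbb{E}}_t\left[ L_t^{\mathrm{CLIP}}(\theta) - c_1 L_t^{\mathrm{VF}}(\theta) + c_2 S[\pi_\theta](s_t) \right],
$$

$$
L_t^{\mathrm{CLIP}}(\theta) = \min\left( r_t(\theta)\hat{A}_t,\ \mathrm{clip}\left(r_t(\theta), 1-\epsilon, 1+\epsilon\right)\hat{A}_t \right),
\qquad
L_t^{\mathrm{VF}}(\theta) = \left( V_\theta(s_t) - V_t^{\mathrm{targ}} \right)^2 .
$$

Negating this objective gives a loss of the same shape as the ADL hw3 line below, with $c_1 = 1.0$ and $c_2 = 0.01$.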

ADL hw3 (agent_pg.py)

```python
loss = -torch.min(surr1, surr2) + 1.0 * nn.MSELoss()(state_values, rewards) - 0.01 * entropy
```
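A NumPy sketch of what the ADL line computes, assuming `rewards` there holds per-step discounted Monte Carlo returns (the name suggests raw rewards, but the code that fills it is not shown). Under that assumption the value target is the observed return $G_t$, which does not depend on the critic. `discounted_returns`, `adl_style_loss`, and the batch-mean reduction are hypothetical helpers for illustration.

```python
import numpy as np


def discounted_returns(rewards, gamma=0.99):
    """Monte Carlo return G_t = r_t + gamma * G_{t+1}, computed backwards over one episode."""
    returns = np.zeros(len(rewards), dtype=np.float64)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


def adl_style_loss(surr1, surr2, state_values, returns, entropy,
                   vf_coef=1.0, ent_coef=0.01):
    """-min(surr1, surr2) + vf_coef * MSE(values, returns) - ent_coef * entropy.

    Everything is averaged over the batch here (an assumption; the original
    line leaves the final reduction of the policy and entropy terms to the caller).
    """
    policy_loss = -np.mean(np.minimum(surr1, surr2))
    # Matches nn.MSELoss() with its default reduction='mean'.
    value_loss = np.mean((np.asarray(state_values) - np.asarray(returns)) ** 2)
    return policy_loss + vf_coef * value_loss - ent_coef * np.mean(entropy)
```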

MLDS hw4 (agent_pg.py)

```python
loss_vf = tf.squared_difference(self.rewards + self.gamma * self.v_preds_next, v_preds)
```
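The MLDS line instead uses the one-step TD target $r_t + \gamma V(s_{t+1})$, which bootstraps from the critic's own prediction for the next state. A NumPy sketch, assuming `v_preds_next` is treated as a constant (no gradient through the target) and that episode ends are masked with a hypothetical `dones` array; the original line has no explicit mask, so it presumably relies on `v_preds_next` already being 0 at terminal steps.

```python
import numpy as np


def td0_targets(rewards, v_preds_next, dones, gamma=0.99):
    """One-step TD target r_t + gamma * V(s_{t+1}), with V(s_{t+1}) zeroed where dones is 1."""
    rewards = np.asarray(rewards, dtype=np.float64)
    v_next = np.asarray(v_preds_next, dtype=np.float64)
    not_done = 1.0 - np.asarray(dones, dtype=np.float64)
    return rewards + gamma * v_next * not_done


def td0_value_loss(v_preds, rewards, v_preds_next, dones, gamma=0.99):
    """Mean squared TD error; targets are plain arrays, i.e. constants w.r.t. the critic."""
    targets = td0_targets(rewards, v_preds_next, dones, gamma)
    return np.mean((targets - np.asarray(v_preds, dtype=np.float64)) ** 2)
```

Both versions are squared-error losses and differ only in the target: the Monte Carlo return is unbiased but noisier, while the TD target has lower variance but inherits the critic's bias. They are the $\lambda = 1$ and $\lambda = 0$ ends of the $\lambda$-return, which is one reason the implementations found online vary.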
